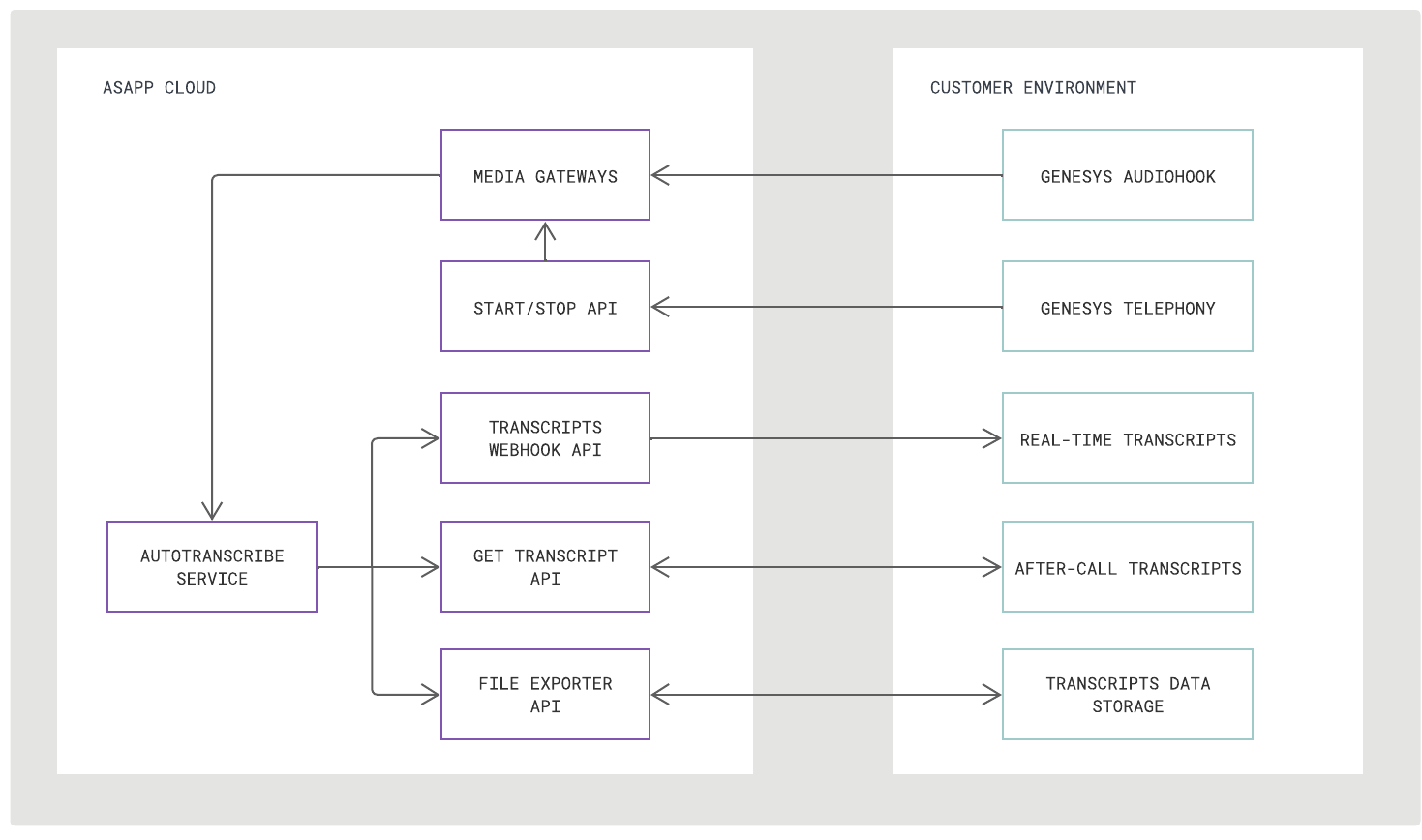

- Media gateways for receiving call audio from Genesys Cloud

- HTTPS API which enables the customer to POST requests to start and stop call transcription

- Webhook to POST real-time transcripts to a designated URL of your choosing, alongside two additional APIs to retrieve transcripts after-call for one or a batch of conversations

Integration Steps

There are three steps to integrate AutoTranscribe into Genesys AudioHook:

- Enable AudioHook and Configure for ASAPP

- Send Start and Stop Requests

- Receive Transcript Outputs

Requirements

Audio Stream Codec

Genesys AudioHook provides audio in the mu-law format at an 8000 Hz sample rate, which is supported by ASAPP. No modification or additional transcoding is needed when forking audio to ASAPP.

When supplying recorded audio to ASAPP for AutoTranscribe model training prior to implementation, send uncompressed .WAV media files with speaker-separated channels.

- Access relevant API documentation (e.g. OpenAPI reference schemas)

- Access API keys for authorization

- Manage user accounts and apps

Visit the Get Started page for instructions on creating a developer account, managing teams and apps, and setting up AI Service APIs.

Integrate with Genesys AudioHook

1. Enable AudioHook and configure for ASAPP

To enable AudioHook within Genesys:

- In Genesys Cloud Admin, navigate to Integrations/Integrations and click “plus” in the upper right to add an integration.

- Find AudioHook Monitor and Install.

- Configure the AudioHook Monitor integration, using the Connection URI (e.g. wss://ws-example.asapp.com/mg-genesysaudiohook-autotranscribe/) and credentials provided by ASAPP.

- Enable voice transcription on desired trunks and within desired Architect Flows. You do not need to select ASAPP as the transcription engine.

2. Send Start and Stop Requests

The /start-streaming and /stop-streaming endpoints of the Start/Stop API control when transcription occurs for every call media stream (identified by the Genesys conversationId) sent to ASAPP’s media gateway. See the Endpoints section to learn how to interact with them.

ASAPP will not begin transcribing call audio until requested to, thus preventing transcription of audio at the very beginning of the Genesys AudioHook audio streaming session, which may include IVR, hold music, or queueing.

Stop requests are used to pause or end transcription for any needed reason. For example, a stop request could be used mid-call when the agent places the call on hold or at the end of the call to prevent transcribing post-call interactions such as satisfaction surveys.
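The start and stop behavior described above can be sketched as a small piece of control logic that decides which endpoint to call as a call progresses. This is a minimal sketch, not ASAPP's implementation: the event names (agent_connected, hold_started, hold_ended, call_ended) and the TranscriptionControl class are hypothetical, and only the two endpoint paths come from this guide.

```python
from typing import Optional

# Endpoint paths as they appear in this guide's use case example.
START_PATH = "/mg-autotranscribe/v1/start-streaming"
STOP_PATH = "/mg-autotranscribe/v1/stop-streaming"


class TranscriptionControl:
    """Tracks whether transcription is active for one Genesys conversationId."""

    def __init__(self, conversation_id: str) -> None:
        self.conversation_id = conversation_id
        self.active = False

    def on_event(self, event: str) -> Optional[str]:
        """Return the endpoint path to call for this event, or None if no call is needed.

        The event names are hypothetical; map them to whatever your
        telephony platform actually emits.
        """
        if event in ("agent_connected", "hold_ended") and not self.active:
            self.active = True
            return START_PATH
        if event in ("hold_started", "call_ended") and self.active:
            self.active = False
            return STOP_PATH
        # IVR, queueing, and repeated events require no request.
        return None
```

Keeping the active flag per conversation avoids sending duplicate start or stop requests when the same event fires twice.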

3. Receive Transcript Outputs

AutoTranscribe outputs transcripts using three separate mechanisms, each corresponding to a different temporal use case:

- Real-time: Webhook posts complete utterances to your target endpoint as they are transcribed during the live conversation

- After-call: GET endpoint responds to your requests for a designated call with the full set of utterances from that completed conversation

- Batch: File Exporter service responds to your request for a designated time interval with a link to a data feed file that includes all utterances from that interval’s conversations

Real-Time via Webhook

ASAPP sends transcript outputs in real-time via HTTPS POST requests to a target URL of your choosing.

Authentication

Once the target is selected, work with your ASAPP account team to implement one of the following supported authentication mechanisms:

- Custom CAs: Custom CA certificates for regular TLS (1.2 or above).

- mTLS: Mutual TLS using custom certificates provided by the customer.

- Secrets: A secret token. The secret name is configurable as is whether it appears in the HTTP header or as a URL parameter.

- OAuth2 (client_credentials): Client credentials to fetch tokens from an authentication server.
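On the receiving side, the secret-token option amounts to comparing a value on each incoming request against the one agreed with ASAPP. The sketch below shows one way a webhook target might do that check for the header variant. The header name x-webhook-secret and the function name is_authorized are assumptions for illustration; the actual secret name is whatever you configure with your ASAPP account team.

```python
import hmac
from typing import Mapping


def is_authorized(
    headers: Mapping[str, str],
    expected_secret: str,
    header_name: str = "x-webhook-secret",  # hypothetical name; the real one is configurable
) -> bool:
    """Check the shared secret carried in a header of an incoming webhook request."""
    # HTTP header names are case-insensitive, so normalize before lookup.
    normalized = {name.lower(): value for name, value in headers.items()}
    supplied = normalized.get(header_name.lower(), "")
    # compare_digest runs in constant time, which avoids leaking the
    # secret through timing differences.
    return hmac.compare_digest(
        supplied.encode("utf-8"), expected_secret.encode("utf-8")
    )
```

A request that fails this check would typically be rejected with a 401 before its body is parsed.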

After a start request is sent to the /start-streaming endpoint, AutoTranscribe begins to publish transcript messages, each of which contains a full utterance for a single call participant.

The expected latency between when ASAPP receives audio for a completed utterance and provides a transcription of that same utterance is 200-600ms.

Perceived latency will also be influenced by any network delay sending audio to ASAPP and receiving transcription messages in return.

Each transcript type message is JSON encoded with these fields:

| Field | Subfield | Description | Example Value |
|---|---|---|---|
| externalConversationId | | Unique identifier with the Genesys conversation Id for the call | 8c259fea-8764-4a92-adc4-73572e9cf016 |
| streamId | | Unique identifier assigned by ASAPP to each call participant’s stream returned in response to /start-streaming and /stop-streaming | 5ce2b755-3f38-11ed-b755-7aed4b5c38d5 |
| sender | externalId | Customer or agent identifier as provided in request to /start-streaming | ef53245 |
| sender | role | A participant role, either customer or agent | customer, agent |
| autotranscribeResponse | message | Type of message | transcript |
| autotranscribeResponse | start | The start ms of the utterance | 0 |
| autotranscribeResponse | end | Elapsed ms since the start of the utterance | 1000 |
| autotranscribeResponse | utterance | Transcribed utterance text | Are you there? |

transcript message format:
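As a rough illustration of consuming such a message, the sketch below parses one payload into a typed record. It assumes that the subfields listed in the table are nested JSON objects under their parent field, which the table suggests but does not state outright. The Utterance class and parse_transcript function are hypothetical names, and the sample values are the example values from the table.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Utterance:
    conversation_id: str
    stream_id: str
    sender_id: str
    role: str
    start_ms: int
    end_ms: int
    text: str


def parse_transcript(body: str) -> Utterance:
    """Parse one webhook body, assuming subfields nest under their parent field."""
    payload = json.loads(body)
    response = payload["autotranscribeResponse"]
    if response["message"] != "transcript":
        raise ValueError(f"unexpected message type: {response['message']!r}")
    sender = payload["sender"]
    return Utterance(
        conversation_id=payload["externalConversationId"],
        stream_id=payload["streamId"],
        sender_id=sender["externalId"],
        role=sender["role"],
        start_ms=int(response["start"]),
        end_ms=int(response["end"]),
        text=response["utterance"],
    )


# Sample built from the example values in the field table.
sample = json.dumps({
    "externalConversationId": "8c259fea-8764-4a92-adc4-73572e9cf016",
    "streamId": "5ce2b755-3f38-11ed-b755-7aed4b5c38d5",
    "sender": {"externalId": "ef53245", "role": "customer"},
    "autotranscribeResponse": {
        "message": "transcript",
        "start": 0,
        "end": 1000,
        "utterance": "Are you there?",
    },
})
utterance = parse_transcript(sample)
print(f"{utterance.role}: {utterance.text}")
```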

Error Handling

Should your target server return an error in response to a POST request, ASAPP will record the error details for the failed message delivery and drop the message.

After-Call via GET Request

AutoTranscribe makes a full transcript available at the following endpoint for a given completed call:

GET /conversation/v1/conversation/messages

Once a conversation is complete, make a request to the endpoint using a conversation identifier and receive back every message in the conversation.

Message Limit

This endpoint will respond with up to 1,000 transcribed messages per conversation, approximately a two-hour continuous call. All messages are received in a single response without any pagination.

To retrieve all messages for calls that exceed this limit, use either a real-time mechanism or File Exporter for transcript retrieval.
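The after-call retrieval and the message limit can be sketched together as follows. This is a sketch under stated assumptions: the base URL is a placeholder, the externalId query parameter name and the asapp-api-id and asapp-api-secret header names are assumptions that should be checked against the API reference, and the function names are hypothetical. The code only builds the request; it does not send it.

```python
import urllib.parse
import urllib.request

MESSAGE_LIMIT = 1000  # per-conversation cap stated in this guide


def build_messages_request(
    base_url: str, conversation_id: str, api_id: str, api_secret: str
) -> urllib.request.Request:
    """Build (but do not send) the after-call transcript request."""
    # "externalId" is an assumed parameter name for the conversation identifier.
    query = urllib.parse.urlencode({"externalId": conversation_id})
    url = f"{base_url}/conversation/v1/conversation/messages?{query}"
    return urllib.request.Request(
        url,
        method="GET",
        headers={
            # Assumed credential header names; confirm in the API reference.
            "asapp-api-id": api_id,
            "asapp-api-secret": api_secret,
        },
    )


def may_be_truncated(messages: list) -> bool:
    """True when the response hit the cap, so later utterances may be missing."""
    return len(messages) >= MESSAGE_LIMIT
```

When may_be_truncated returns True, the full conversation would need to come from the webhook output or File Exporter instead.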

Transcription settings for a given call (e.g. language, detailed tokens, redaction) are set with the Start/Stop API when call transcription is initiated. All transcripts retrieved after the call reflect the settings initially requested through the Start/Stop API.

Batch via File Exporter

AutoTranscribe makes full transcripts for batches of calls available using the File Exporter service’s utterances data feed.

The File Exporter service is meant to be used as a batch mechanism for exporting data to your data warehouse, either on a scheduled basis (e.g. nightly, weekly) or for ad hoc analyses. Data that populates feeds for the File Exporter service updates once daily at 2:00AM UTC.

Use Case Example

Real-Time Transcription

This real-time transcription use case example consists of an English language call between an agent and customer with redaction enabled, ending with a hold. Note that redaction is enabled by default and does not need to be requested explicitly.

- Ensure the Genesys AudioHook is enabled and configured on the desired trunk and flow.

- When the customer and agent are connected, send ASAPP a request to start transcription for the call: POST /mg-autotranscribe/v1/start-streaming

- The agent and customer begin their conversation, and separate HTTPS POST transcript messages are sent for each participant from ASAPP’s webhook publisher to a target endpoint configured to receive the messages.

- Later in the conversation, the agent puts the customer on hold. This triggers a request to the /stop-streaming endpoint to pause transcription and prevents hold music and promotional messages from being transcribed: POST /mg-autotranscribe/v1/stop-streaming
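The start and stop requests in this example might be assembled along the following lines. Only the two endpoint paths come from this guide. The host is a placeholder, and every body field name (conversationId, customerId, agentId, language) is hypothetical, chosen to illustrate the shape of the calls; consult the Start/Stop API reference for the real schema and authentication headers. Redaction is not requested because it is enabled by default.

```python
import json
import urllib.request

BASE_URL = "https://api.example.com"  # placeholder host, not a real ASAPP endpoint


def build_streaming_request(
    action: str,
    conversation_id: str,
    customer_id: str = "",
    agent_id: str = "",
    language: str = "en-US",
) -> urllib.request.Request:
    """Build (but do not send) a start or stop request for one conversation."""
    if action not in ("start", "stop"):
        raise ValueError("action must be 'start' or 'stop'")
    # All body field names below are hypothetical placeholders.
    body = {"conversationId": conversation_id}
    if action == "start":
        body.update(
            {"customerId": customer_id, "agentId": agent_id, "language": language}
        )
    return urllib.request.Request(
        f"{BASE_URL}/mg-autotranscribe/v1/{action}-streaming",
        data=json.dumps(body).encode("utf-8"),
        method="POST",
        headers={"Content-Type": "application/json"},
    )


# Start when the customer and agent connect, stop when the agent places a hold.
start_request = build_streaming_request(
    "start", "8c259fea-8764-4a92-adc4-73572e9cf016", "ef53245", "agent-1"
)
stop_request = build_streaming_request("stop", "8c259fea-8764-4a92-adc4-73572e9cf016")
```

Either request could then be sent with urllib.request.urlopen once real credentials and the documented body schema are in place.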